Does Your AI Have "Brainrot"? The Hilarious (and Terrifying) Truth About LLM Decay

From subtly silly responses to full-blown digital delirium, we explore why your favorite AI might be losing its mind, and why startups are losing their shirts.

You've seen the headlines: "AI will write your emails!" "AI will create art!" "AI will solve world peace!"

But lately, you might have also seen screenshots like this:

If your AI started talking like a spam email from 2003, you're not alone. Welcome to the wonderful, bewildering world of "LLM Brainrot."

It's a catchy, slightly sarcastic term that's quickly becoming the go-to phrase for when large language models (LLMs) — like ChatGPT, Gemini, Claude, and others — start to get a bit… weird. They become less helpful, more repetitive, prone to errors, or just plain unhinged.

Think of it like that friend who started out sharp, witty, and always had the perfect comeback, but after months of endless online scrolling and too much late-night pizza, they're now just quoting memes from 2010 and forgetting where they put their keys.

What is LLM Brainrot, Really?

In proper tech terms, LLM Brainrot (or "model degradation" if you want to be boring) refers to the observed decline in the performance and coherence of AI models over time. This isn't about them "getting tired" in a human sense, but rather a combination of factors that can lead to a less reliable, less intelligent AI.

There are a few leading theories on why our digital companions are developing a case of the digital dumbs:

1. The "Data Contamination" Theory (AKA: Eating its Own Tail 🐍)

The scariest idea! LLMs learn by reading vast amounts of text from the internet. But what happens when the internet starts getting flooded with text written by other LLMs?

It's like feeding an artist nothing but copies of their own work. Eventually, the art gets stale, repetitive, and loses its originality. Researchers, including a team from Oxford, Cambridge, and other universities whose study appeared in Nature in 2024, have shown that repeatedly training models on AI-generated data can lead to "model collapse," where each generation loses more of the rare, diverse parts of the original data and drifts toward bland, repetitive output. It's an ouroboros of mediocrity.

Insight: If future AIs are learning from today's AI, which might already be slightly 'brainrotted', we could be entering a feedback loop of diminishing returns.
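The feedback loop can be illustrated with a toy simulation. This is only a sketch under strong assumptions, not how real LLMs are trained: the "model" here is just a table of token frequencies, and each generation is fitted to a finite sample drawn from the previous one. The function names and parameters are invented for illustration.

```python
import random
from collections import Counter


def next_generation(counts, sample_size, rng):
    """Fit a new 'model' to a finite sample drawn from the previous model."""
    tokens = list(counts)
    weights = [counts[t] for t in tokens]
    samples = rng.choices(tokens, weights=weights, k=sample_size)
    return Counter(samples)


def simulate_collapse(vocab_size=50, sample_size=200, generations=30, seed=0):
    """Return the number of distinct tokens that survive in each generation."""
    rng = random.Random(seed)
    # Generation 0 stands in for human-written text: a few common tokens
    # and a long tail of rare ones (Zipf-like weights).
    counts = Counter({f"tok{i}": 1000 // (i + 1) for i in range(vocab_size)})
    support_sizes = [len(counts)]
    for _ in range(generations):
        counts = next_generation(counts, sample_size, rng)
        support_sizes.append(len(counts))
    return support_sizes


if __name__ == "__main__":
    print(simulate_collapse())
```

A token that fails to show up in one generation's sample has zero probability in every later generation, so the vocabulary in this toy setup can only shrink, and rare tokens tend to disappear first.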

2. The "Over-Optimization" Theory (AKA: Too Many Cooks 👨‍🍳)

Remember all those safety guardrails and "alignment" efforts? Companies are constantly tweaking their LLMs to be safer, less biased, and more helpful. This involves a process called Reinforcement Learning from Human Feedback (RLHF).

While crucial, too much fine-tuning can sometimes lead to unintended consequences. It's like trying to make a highly specialized racing car safe for city driving — you might lose some of its original performance or intelligence in the process of adding all those bumpers and speed limits. The AI might become too good at avoiding certain topics or phrasing, and in doing so, loses its ability to generate nuanced or creative responses.

Insight: There's a delicate balance between making an AI safe and making it smart. Too much "safety" can sometimes dilute its core intelligence.

3. The "Feature Creep & Model Drift" Theory (AKA: The Swiss Army Knife Dilemma 🔪)

LLMs aren't static. They're constantly being updated, patched, and given new abilities — image generation, web browsing, coding assistants, voice capabilities. Each new feature adds complexity and can sometimes pull the model's "focus" in different directions.

Imagine trying to be a world-class chef, a competitive swimmer, and a rocket scientist all at once. You might be decent at all, but excellent at none. The core language generation ability can "drift" as the model tries to accommodate more and more tasks.

Insight: The quest for a "do-it-all" AI might come at the expense of its core linguistic prowess.

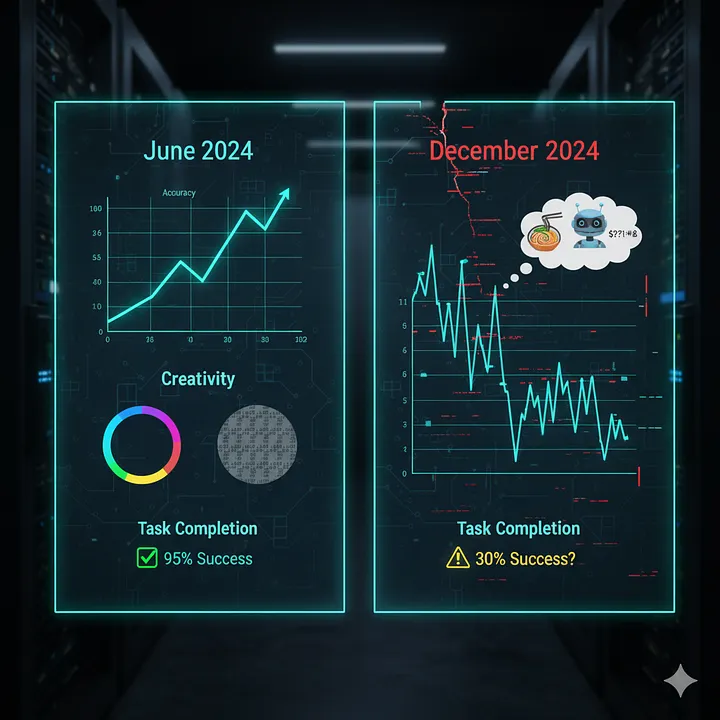

Real Data & Insights: Is Brainrot Really Happening?

Yes, absolutely. Here's what some of the big players have shown (and what users are reporting):

Stanford & UC Berkeley Study (2023): Researchers compared the March 2023 and June 2023 versions of GPT-3.5 and GPT-4. They reported that in just those few months, GPT-4's accuracy on a math task (identifying prime numbers) dropped from 97.6% to 2.4%, and its ability to follow instructions also declined. GPT-3.5, conversely, improved on some tasks while degrading on others. It's like a digital whack-a-mole.
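A comparison like this boils down to running the same test set against two snapshots of a model and comparing scores. Here is a minimal sketch of that idea. The two "snapshots" are hypothetical stub functions rather than real API calls, and the harness is an illustration, not the study's actual code.

```python
def is_prime(n):
    """Ground truth for the toy benchmark."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def accuracy(model_fn, numbers):
    """Fraction of numbers for which model_fn's yes/no answer is correct."""
    correct = sum(1 for n in numbers if model_fn(n) == is_prime(n))
    return correct / len(numbers)


# Hypothetical stand-ins for two snapshots of the same model. In practice
# these would wrap calls to specific dated model versions.
def snapshot_a(n):
    return is_prime(n)  # answers every question correctly


def snapshot_b(n):
    return False  # always answers "not prime"


def compare_snapshots(numbers):
    """Score both snapshots on the same test set."""
    return {
        "snapshot_a": accuracy(snapshot_a, numbers),
        "snapshot_b": accuracy(snapshot_b, numbers),
    }
```

On a test set made up only of primes, `snapshot_b` scores 0.0; on a half-prime, half-composite set it scores 0.5. The choice of test set shapes the headline number, which is worth remembering when reading any single benchmark result.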

User Reports: Search "ChatGPT getting dumber Reddit" and you'll find countless threads. Users report models becoming lazier, giving shorter answers, requiring more prodding, and being more prone to repeating themselves. It's not just a feeling; many have documented examples.

Here's a simplified view of the observed shifts:

The Startup Graveyard: How LLM Brainrot Kills AI Companies

This isn't just a funny anecdote for your Twitter feed; it's a serious threat, especially to the legions of AI startups.

Remember our previous chat about the "AI Cost Bubble"? Startups pour millions into licensing powerful LLMs via APIs (paying per token, which is roughly "by the word"). Their entire product is often just a clever wrapper around a foundational model like GPT-4 or Claude.

The deadly cocktail:

Variable Costs: If the underlying LLM gets worse, it requires more elaborate prompts, more re-runs, and more user interaction to get a good output. More interactions = more tokens = higher API costs for the startup.

Customer Churn: If the AI's quality drops, users get frustrated. "My AI writing assistant used to be brilliant, now it just repeats itself!" This leads to canceled subscriptions and a dwindling user base.

No Differentiation: If your startup's core value is simply providing access to a slightly tweaked version of a public LLM, and that LLM starts to rot, your entire value proposition disappears. You're selling a house built on sand.

Imagine your entire business model relies on a tool that's secretly getting worse month by month, driving up your costs and driving away your customers. It's an existential crisis that many smaller AI companies are already facing.
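To put rough numbers on the "variable costs" point, here is a back-of-the-envelope sketch. It assumes each attempt succeeds independently with the same probability, so the expected number of attempts per good output is 1 divided by the success rate. The prices, token counts, and success rates are made up for illustration.

```python
def cost_per_attempt(tokens_per_attempt, price_per_1k_tokens):
    """Cost of a single API call, given its token count and a per-1k price."""
    return tokens_per_attempt / 1000 * price_per_1k_tokens


def expected_cost_per_success(tokens_per_attempt, price_per_1k_tokens, success_rate):
    """Expected spend to get one acceptable output, retrying until success."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt(tokens_per_attempt, price_per_1k_tokens) / success_rate


# Hypothetical before/after figures for the same task.
healthy = expected_cost_per_success(1000, 0.01, 0.9)   # short prompt, rarely retried
degraded = expected_cost_per_success(1500, 0.01, 0.6)  # longer prompt, more retries
```

With these made-up inputs, the degraded model costs 2.25 times as much per usable output, even though the per-token price never changed.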

Can We Cure LLM Brainrot?

The good news is that researchers and AI companies are keenly aware of this problem and are working on solutions.

- Better Training Data: Finding new, diverse, human-generated data to keep models fresh.

- "De-decay" Techniques: Methods to actively combat degradation during fine-tuning or updates.

- New Architectures: Developing models that are more robust to these issues.

It's a complex problem, and there's no magic pill. For now, it means we, as users, need to be vigilant. Keep fact-checking, stay skeptical, and if your AI starts asking for "Free HAE HAT!", maybe it's time for a reboot (or at least a good laugh).

How Cost Katana Helps You Combat LLM Brainrot

At Cost Katana, we're acutely aware of LLM Brainrot and its impact on your bottom line. Here's how we help:

1. Smart Model Routing

When one model starts degrading, we automatically route your requests to better-performing alternatives, ensuring consistent quality without manual intervention.

2. Performance Monitoring

We track model performance metrics in real-time, alerting you when a model's quality drops below acceptable thresholds.

3. Cost Impact Analysis

We quantify how much model degradation is costing you in extra tokens, retries, and failed requests.

4. Version Pinning

Lock your applications to specific, tested model versions to avoid unexpected degradation from updates.
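For readers curious what monitoring, routing, and pinning can look like in code, here is a generic sketch. It is not Cost Katana's implementation or API: the class and function names are hypothetical, the quality scores are assumed to come from some evaluation you already run (for example, a pass rate on a fixed test set), and the model IDs are examples of dated snapshots.

```python
from collections import deque


class QualityMonitor:
    """Rolling mean of recent quality scores (0.0 to 1.0) for one model."""

    def __init__(self, window=20):
        self.scores = deque(maxlen=window)

    def record(self, score):
        self.scores.append(score)

    def rolling_mean(self):
        # With no data yet, assume the model is healthy.
        return sum(self.scores) / len(self.scores) if self.scores else 1.0


def pick_model(preferred_order, monitors, min_quality=0.8):
    """Return the first model whose rolling quality meets min_quality.

    If none qualifies, fall back to the best-scoring model.
    """
    if not preferred_order:
        raise ValueError("preferred_order must not be empty")
    for name in preferred_order:
        if monitors[name].rolling_mean() >= min_quality:
            return name
    return max(preferred_order, key=lambda name: monitors[name].rolling_mean())


# Pinned, dated model identifiers rather than floating aliases, so that a
# provider-side update cannot silently change behaviour. Example IDs only.
PREFERRED_ORDER = ["gpt-4-0613", "gpt-3.5-turbo-0125"]
```

In use, you would keep one `QualityMonitor` per model, call `record` after each evaluated response, and call `pick_model` before each request. Pinning has a cost too: providers eventually retire old snapshots, so pinned versions still need periodic re-testing and planned upgrades.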

The takeaway: LLM Brainrot is real, it's weird, and it's a huge challenge for the future of AI. It reminds us that these powerful tools are still very much under construction, and sometimes, even digital brains need a mental health day.

Thanks for reading! Don't let your AI get brainrot. Unless it's funny. Then definitely share screenshots. 🤖🧠🤯

About the Author: Sourav Biswas is the Chief Product Officer at Cost Katana, where he leads product strategy for AI cost optimization. He's a tech entrepreneur, founder of Hypothesize, and is passionate about building the future of human-AI interaction.

Worried about LLM Brainrot affecting your applications? Start monitoring with Cost Katana and protect your AI investments.

Tags

Found this helpful? Share it!

Related Articles

Continue your learning journey with these related insights

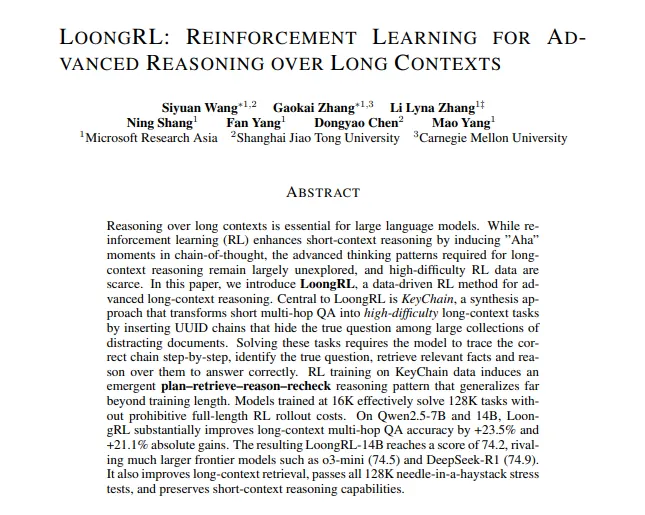

Forget LLM Brainrot: Introducing LoongRL

This new training method teaches AI to actually reason over massive documents, not just get confused. Here's how it works, in plain English.

Sourav Biswas

October 25, 2024

How Much Does '1 AI' Cost? A (Hilariously) Simple Guide to AI Pricing

From your $20 ChatGPT subscription to the billion-dollar 'AI cost bubble' that's making startups cry. We break it all down.

Sourav Biswas

October 21, 2024

From AI Chaos to Cost Clarity: Why We Built CostKatana

A journey from AI cost blindness to complete visibility—and why every AI-powered business needs this intelligence.

Abdul Sagheer

January 18, 2026